Artificial intelligence (AI) is no longer an abstract concept; it is quickly becoming an integral part of our daily lives. From virtual assistants and transportation to eCommerce and even healthcare, the applications of AI continue to expand. For investors, understanding the risks and opportunities associated with this new technology is vitally important.

Since the release of OpenAI’s ChatGPT in November 2022, investors have recognised the large impact generative AI¹ could have on businesses’ productivity, growth, and innovation.

In a previous article, I outlined the AI investment opportunities that we see across chip, software and cloud providers. While we have high conviction in the structural growth tailwinds of AI, we must also understand the risks associated with this expansive technology. Below we focus on some of the ESG risks associated with AI and on the rapidly evolving regulatory landscape.

What are the ESG risks of AI?

Despite the incredible benefits that AI can bring to businesses, it comes with significant social risks – privacy concerns, bias, discrimination, misinformation, ethical considerations, job displacement, safety and autonomy, to name a few.

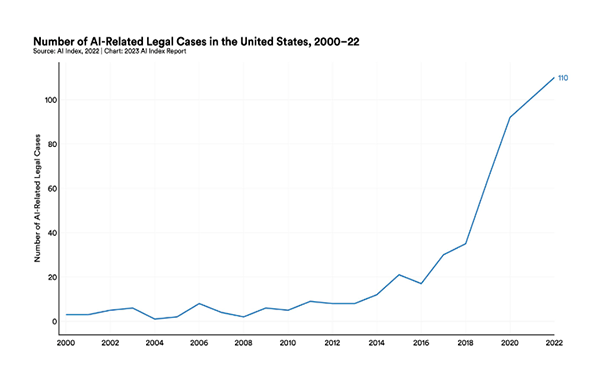

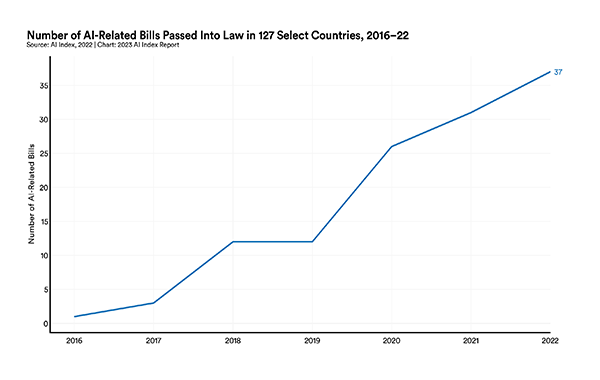

We’re already seeing rising cases of AI-related controversies, litigation and government intervention.

Source: https://aiindex.stanford.edu/report/

However, these risks should be viewed across short-, medium- and long-term horizons for a more detailed understanding of the potential impacts.

Short-term risks (within the next few years): These could include misinformation, bias and inaccuracies, copyright infringement, and concerns over data breaches, data security and data sovereignty. For example:

- AI has the potential to misdiagnose health issues.

- A technology platform may inadvertently distribute misinformation that could be discriminatory or fraudulent.

- AI training datasets may breach copyright. For example, The New York Times filed a lawsuit against OpenAI and Microsoft in December 2023, claiming that “widescale copying” by their AI systems constitutes copyright infringement.² Whether its allegations have merit remains to be seen.

Medium-term risks (next 5 to 10 years): These could include job losses, social manipulation, human rights violations, and company or economic disruption. For example:

- Automated recruitment systems may have a discriminatory bias against specific groups of people based on the data they are fed and trained on.

Long-term risks (10 years and beyond): These could include environmental or existential risks. For example:

- The increasing use of AI could lead to significantly higher energy demands, driven by the growing utilisation of computing resources.

To mitigate these risks, regulation has increased across many jurisdictions. It is important that both developers and users of AI technology factor these regulatory expectations into their intended use cases, to minimise the potential ESG risks, regulatory fines and impacts on cash flows.

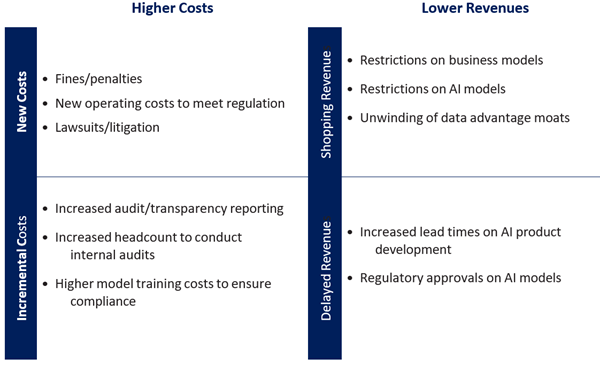

What impacts could regulatory risks have on companies?

AI regulation, if not managed well, could have a negative impact on businesses’ cash flows. This may come in the form of higher costs, such as fines, litigation and increased operating costs to meet regulatory requirements. Regulation could also lead to lower revenues, for example through constraints on new product development.


What will AI regulation look like?

AI regulation has long been discussed but lags behind developments in the technology. Early regulation targeted the short- and medium-term risks – to protect basic data rights, fundamental rights, and democratic freedoms in certain regions. New York offers an example of this lag: AI was in use for résumé screening long before New York’s AI hiring law (under which employers who use AI in hiring must inform candidates) was implemented.

Each jurisdiction is approaching AI regulation differently, ranging from self-regulation and voluntary standards to strict rules with penalties for breaches.

European Union (EU):

In April 2021, the EU proposed a risk-based framework, the EU AI Act. Under the Act, AI use cases are categorised and restricted according to whether they pose an unacceptable, high or low risk to human safety and fundamental rights. The AI Act is progressing through the legislative process and will sit alongside the Digital Markets Act, the Digital Services Act, the Data Governance Act and the Data Act.

United Kingdom (UK):

The UK views AI as a general-purpose technology and has proposed a principles-based approach to regulation. Industry-based regulators have flexibility in how they define their own regulations, using the principles as guidance.

United States (US):

Prior to the recent executive order issued by President Biden in October 2023, the US encouraged companies to follow a set of “voluntary commitments”. The new executive order grants the government greater powers to supervise how AI models are built and tested.

Australia:

Australian ministers have commented that a new advisory body would work with government, industry and academic experts to legislate AI safeguards.

What’s next for AI and regulation?

As we have highlighted, one of the challenges of AI regulation is that the current approach is fragmented across jurisdictions, making it more complex for companies creating or using AI to remain compliant. One way to overcome this would be the adoption of global AI standards, creating consistency in how companies ensure responsible AI practices. We will continue to monitor evolving AI regulation, as well as the application of existing legislation to AI use cases, such as The New York Times’ copyright infringement case.³

What does this mean for investors?

Investors need to carefully consider the ESG risks associated with AI when making investment decisions. These risks, ranging from reputational damage to regulatory non-compliance and workforce impact, may influence the long-term growth and performance of companies. To help mitigate these risks, investors should have a detailed understanding of how committed the companies they invest in are to responsible AI practices. Rigorous due diligence is essential, involving thorough research and analysis of how companies approach and address the social risks associated with AI. Companies that prioritise ethical considerations, engage with stakeholders and navigate regulatory landscapes effectively give investors greater confidence that their investments are aligned with responsible business practices. Such companies are also better positioned to withstand the potential environmental, social and regulatory challenges associated with AI technologies.

Leading cloud and AI vendor Microsoft, a key exposure in our global equity portfolio, has already put in place initiatives to reduce regulatory risk. These include a principled approach to AI development, transparent reporting on its responsible AI learnings, an AI assurance program to bridge customer requirements with regulatory compliance, and internal governance teams integrated into leadership.

What should AI developers be working towards to minimise risk?

Expectations of companies developing AI can be summarised as:

- Engage actively with industry and the community on AI development

- Report transparently on developments and learnings

- Promote fairness and inclusivity in the development of AI models and datasets

- Provide adequate disclosures

- Maintain internal governance teams to monitor AI risks (human oversight)

- Establish policies and processes to prevent AI risks such as bias

- Implement AI explainability models to allow for external auditing

While some of the AI opportunities are being priced into stocks, there are still opportunities to be found, especially where companies can exploit the disruptive potential of AI. Our global equity strategies are positioned to benefit from AI growth trends through our exposure to the leading cloud and AI vendors Microsoft, Alphabet and Amazon. ASML is well positioned as a monopoly provider of leading-edge manufacturing equipment, while enterprise software vendors like SAP also stand to benefit.

1 Generative AI refers to algorithms that can be used to create new content based on the data they were trained on. This can include audio, images, code, text and more.

2 Boom in A.I. Prompts a Test of Copyright Law - The New York Times (nytimes.com)

3 Boom in A.I. Prompts a Test of Copyright Law - The New York Times (nytimes.com)

Adrian Lu is an Investment Analyst at Magellan Group, a sponsor of Firstlinks. This article has been issued by Magellan Asset Management Limited ABN 31 120 593 946 AFS Licence No. 304 301 (‘Magellan’) and has been prepared for general information purposes only and must not be construed as investment advice or as an investment recommendation. This material does not take into account your investment objectives, financial situation or particular needs.
